The cheapest ball in the bay can become the most expensive item in the room.

Cheap golf balls tear impact screens when scuffs, dirt, chipped paint, or seam flashing turn the ball’s surface abrasive at impact. In a commercial simulator bay, the real cost is not the ball itself but premature screen wear, bay downtime, lost revenue, and a visibly degraded guest experience.

Indoor golf changed the cost equation. Outdoors, a lost ball is a consumable problem. Indoors, a scuffed or outdoor-used ball starts abrading a revenue-generating asset.

Why Does a Cheap Ball Become a $3,000 Problem?

You think you are saving on OpEx. What you may actually be doing is grinding down a multi-thousand-dollar screen inside a room that sells by the hour.

A cheap simulator ball becomes expensive when it shortens screen life or forces a bay offline. In an indoor business, the real cost sits in premature screen wear, replacement labor, lost booked hours, and a room that starts looking tired before the season is over.

The OpEx Trap

The financial mistake is not buying balls cheaply. The financial mistake is buying balls without pricing the asset they hit.

That distinction matters even more after the busy 2025–2026 indoor season, because more clubs and venues now treat bays as year-round revenue assets, not side attractions. Once an indoor bay becomes part of the booking engine, the ball is no longer just another line in the consumables budget. It becomes part of the bay-protection system.

Case Study: The $0.20 Saving That Cost $2,350 in 48 Hours

Last winter, I got an email from Steve, a high-end indoor club owner in Denver, who bought cheap refinished range balls to save $0.20 per ball.

The failure hit on a busy Saturday in his VIP Bay. A guest hit a high-spin wedge. The ball’s rough cover and seam flashing acted like abrasive media, slicing clean through the impact screen. That $0.20 saving cost Steve an $850 replacement and $1,500 in refunded bookings over 48 hours of downtime.

He sent us the ball for analysis. The diagnosis was simple: indoor venues sell time, and the ball’s surface protects that asset. We switched him to indoor-spec balls with controlled seams and stable clear coats. The tearing stopped.

The asset being hit is not cheap. Commercial-grade impact-screen systems and pro-level enclosure packages sit firmly in multi-thousand-dollar territory, while the launch-monitor room behind them often represents a much larger investment in hardware, turf, lighting, booking software, and interior build-out. Once that room is bookable by the hour, a ball decision that looks tiny in purchasing can become expensive in operations.

This is the cost lens buyers should use:

| Cost lever | What the buyer thinks is being saved | What actually gets hit | What to measure next |
|---|---|---|---|
| $0.50 ball choice | Lower OpEx per shot | Screen wear and bay uptime | Build a downtime-sensitive TCO model |
| Reused outdoor range balls | Free / already in stock | Screen surface and premium appearance | Separate indoor and outdoor inventory |
| No indoor-ball spec | Fewer purchasing decisions | Replacement-cycle control | Define indoor-only ball criteria |

A club that bills a premium bay by the hour is not operating a fabric wall with a projector. It is operating a revenue system. Once that is true, the question stops being “what is the cheapest ball?” and becomes “what ball is cheapest after wear and downtime are counted?”

Make indoor segregation a written operating rule, not a verbal habit. Require indoor deployment only, and reject any outdoor-used or visibly scuffed inventory from bay use. That one line does more asset protection than another month of arguing over ball price.

✔ True — The ball is part of the bay-protection system

In a commercial simulator room, the ball is no longer just an expendable hitting item. It is one of the few objects repeatedly making high-speed contact with a revenue-generating asset.

✘ False — “We are only saving on balls”

You are also making an implicit decision about screen wear, room appearance, and whether the bay stays open during booked hours.

What Actually Tears an Impact Screen?

Buyers often ask whether real golf balls ruin screens. That is the wrong question.

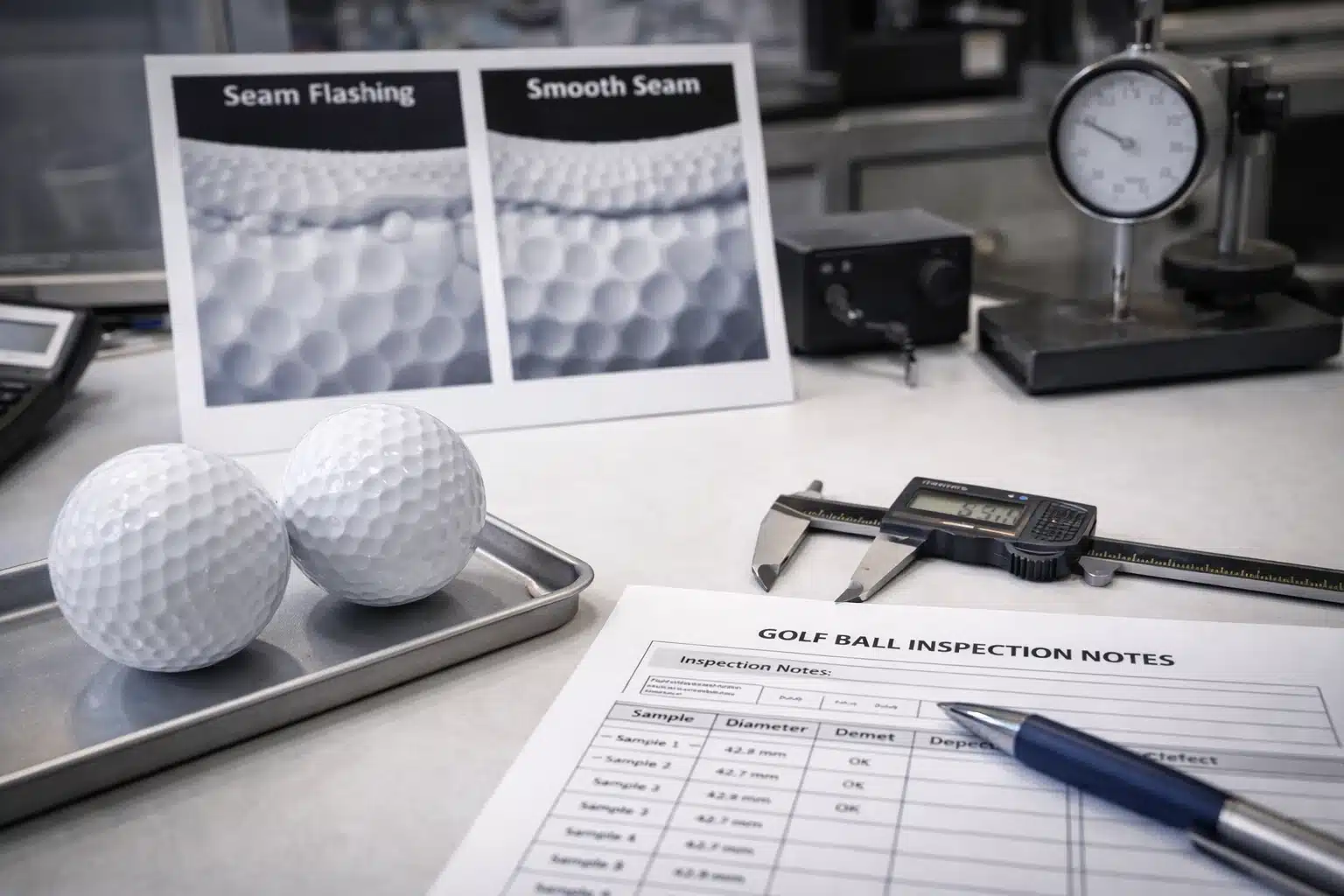

Impact screens tear when a ball’s surface becomes abrasive. Dirt, scuffs, chipped paint, and seam flashing increase friction at impact, especially on high-spin shots, turning the ball from a measurement tool into a wear mechanism.

Flashing and Scuffs: The Abrasion Mechanism

The problem is not “real balls indoors” in the abstract. The problem is the wrong surface condition indoors.

A clean, smooth, controlled ball and a dirty, chipped, slightly rough ball are not remotely the same object from a screen-wear perspective. The first behaves like a controlled test item. The second behaves like a rotating abrasive. That is why indoor-ball choice should be treated as a fit-for-use decision, not just a “can this ball be hit?” decision.

Two failure modes matter most.

The first is seam or flashing risk. If the equator has visible roughness, excess material, or a prominent mold line, the ball can act like a micro-edge at impact. It does not need to look dramatic to matter. Under repeated contact, small surface imperfections become repeated fiber stress.

The second is surface breakdown. Dirt, chips, paint failure, and burr formation can turn the outside of the ball into the indoor version of sandpaper. This gets worse on high-spin shots, where friction lives longer at contact and visible burn-like marks are already part of normal screen wear. That is why keeping clubs and balls clean, rotating balls, and separating indoor from outdoor inventory all reduce wear. They are not housekeeping niceties. They are operating controls.

What the Screen Actually Sees at Impact

- seam flashing = micro-edge
- chipped finish = rough abrasive surface
- dirty or outdoor-used balls = friction contamination

The screen does not care whether the ball was cheap. It cares whether the surface stayed smooth at impact.

This also has a safety angle. Impact screens are not just projection surfaces. They are part of a safety system that must absorb, slow, and control the ball’s energy. The more worn the strike zone becomes, the more bounce-back and rebound risk enters the room. That does not automatically become a legal case, but it does become a safety concern and a liability exposure. In premium bays, that matters to guest confidence as much as it matters to maintenance.

The clean buyer move is simple. Request seam macro photos with scale markers, then ask for repeated-impact surface comparisons on the same ball platform. Inspect the equator. Inspect the post-impact surface. Look for visible flashing, rough equators, chipped finish, or burr formation. If the supplier says “high quality” but cannot show seam proof, that is not evidence. That is mood.

A used outdoor range ball may still produce data. That does not make it acceptable for a premium indoor bay.

How Do You Engineer a Screen-Friendlier Indoor Ball?

A generic supplier will tell you the ball is premium. A useful supplier will tell you why the surface stays smooth.

A screen-friendlier indoor ball starts with a smoother equator, a more stable surface finish, and a compression window that does not make impact unnecessarily harsh. Buyers should approve the ball as an indoor-use system, not just as a generic range ball.

This is where vague quality language needs to become a real indoor-ball specification.

The first variable is mold precision. A better indoor ball starts with a cleaner equator. If the seam is flush or near-flush, the ball is less likely to introduce a cutting edge into repeated screen contact. That does not mean the ball is magically safe forever. It means the buyer has removed one avoidable abrasion source before the ball ever enters the bay.

The second variable is finish stability. A ball that leaves production with a smooth surface but quickly chips, scuffs, or grows rough patches after repeated impact is still the wrong indoor ball. This is why surface-condition proof matters more than a pretty new-ball photo. A controlled clear-coat system should help the surface stay smooth under repeated contact instead of breaking into a rougher, screen-abrasive state.

The third variable is compression window. An ultra-hard distance-oriented feel may not be the best indoor answer just because it is durable. Indoor use is not a longest-drive shelf contest. It is an impact-management environment. A more controlled mid-compression specification can help reduce harshness, moderate rebound behavior, and improve room feel, provided the supplier can state the target and support it with simple validation notes. You do not need pseudo-scientific theater here. You do need a clear statement of what the ball is supposed to be.

This is the indoor spec that matters:

| Indoor-ball variable | Screen risk if wrong | What to verify | Next step |
|---|---|---|---|
| Mold precision | Seam acts like a micro-edge | Macro equator inspection | Require seamless or near-flush equator standard |
| Clear-coat durability | Chips or rough patches abrade the screen | Repeated-impact surface photos | Compare finish before and after test cycle |
| Compression window | Harder feel and harsher energy transfer | Stated compression target + simple rebound or noise note | Choose fit-for-bay spec, not distance-ball spec |

Minimum Indoor-Ball Spec for Premium Bays

- Equator must be flush or near-flush under macro inspection
- Surface must remain smooth after repeated-impact testing
- Compression target must be stated, not implied
- Indoor-use deployment rules must be written into receiving and bay operations

The goal is not to find a mythical ball that can never damage a screen. It is to define an indoor-use ball that reduces ball-induced abrasion and supports longer screen life.

Process words can also hide meaningful differences. If a supplier says the ball has a protective finish, ask what the finish system actually is and how it is controlled. If a supplier says the ball is smooth, ask whether that means visual smoothness in a hero sample or measured seam condition across a production lot. A mature indoor-ball program is built on proof after impact, not adjectives before impact.

Approval should be tied to the same production candidate used for screen testing. No seam proof, no surface-condition proof, no indoor-use deployment guidance, no approval.

✔ True — Indoor fit matters more than generic quality language

A ball can be fine for outdoor range use and still be poorly matched to indoor screen contact. Indoor approval should be based on seam precision, surface stability, and fit-for-bay compression logic.

✘ False — “Premium quality” is enough information

Not if the supplier cannot explain the seam, finish, and post-impact surface condition of the actual indoor candidate you are being asked to buy.

What Does Bay Downtime Really Cost?

The screen is not the most expensive part of a failure event. The empty bay is.

The true cost of a bad indoor ball is not the ball. It is the combination of replacement-screen spending and lost booked hours while the bay is offline. Once downtime enters the model, cheap balls stop looking cheap.

Lost Hours Matter More Than Ball Price

This is where buyers usually undercount the damage. They treat a torn or badly worn screen as a materials problem. Replace the screen, move on. But the bay does not earn during replacement, setup, inspection, and restart. The revenue system pauses.

That is why this needs a CFO-readable model instead of another maintenance anecdote.

Start with public bay-rate reality. Premium indoor bays commonly rent inside a $50–$100 per hour range. That means even a modest block of lost bookings starts adding up quickly. If eight booked hours are lost, the revenue hit is roughly $400–$800. At twelve hours, it becomes roughly $600–$1,200. At twenty booked hours, the lost revenue reaches about $1,000–$2,000. That is before the screen cost enters the conversation.
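The lost-booking ranges above are simple multiplication, and a short Python sketch makes it easy to rerun them with a different rate band. The function name `lost_revenue_range` is hypothetical, and the $50 and $100 defaults are only the illustrative hourly range quoted above, not a benchmark for any specific venue.

```python
def lost_revenue_range(hours_lost, low_rate=50, high_rate=100):
    """Return (low, high) lost booking revenue in dollars.

    low_rate and high_rate are hourly bay rates; the defaults are the
    illustrative $50-$100 range from the text, not measured data.
    """
    return hours_lost * low_rate, hours_lost * high_rate


# Reproduce the three downtime blocks discussed in the text.
for hours in (8, 12, 20):
    low, high = lost_revenue_range(hours)
    print(f"{hours} booked hours lost: ${low:,} to ${high:,}")
```

Swap in your own rate band to see how quickly a single offline weekend moves the number.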

Now layer in an illustrative operator model using stated assumptions:

- bay rate: $75 per hour
- replacement screen cost: $850
- booked hours lost per replacement event: 8
- cheap indoor-ball scenario: 3 screen changes per year
- controlled indoor-ball scenario: 1 screen change per year
- cheap ball cost: $0.50 each
- controlled indoor ball cost: $0.70 each

Under that model, the cheap-ball program appears to save $0.20 per ball. On 10,000 balls, that looks like a $2,000 saving. But screen-and-downtime exposure tells a different story. Each replacement event costs $850 for the screen plus eight lost hours at $75, or $1,450. Three events a year create about $4,350 in annual replacement-plus-downtime exposure. One event creates about $1,450. The difference is roughly $2,900 per year, which already exceeds the $2,000 ball saving by about $900 before you count the visual degradation of the room.

If downtime rises to twenty booked hours per event, the gap becomes much uglier. Each event now costs $2,350, so three events total about $7,050 against $2,350 for one, and the screen-and-downtime difference reaches roughly $4,700. That is the moment the “cheap” ball stops being a cost-control decision and starts looking like a false saving.

That is why simulator screens should be priced as part of an operating model, not as stand-alone maintenance fabric. The bay is a revenue asset. The screen is one of its working surfaces. The ball is one of the few inputs repeatedly contacting that surface at speed. Once you see those three together, the procurement logic changes.

Use one editable worksheet with your own numbers, not someone else’s folklore. Plug in your actual bay rate, replacement-screen cost, expected lost hours, and ball cost difference. Then label every replacement-frequency or lifespan number as an operator assumption unless it comes from your own records. That keeps the model honest and still makes the decision painfully clear.
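The worksheet idea can be sketched in a few lines of Python. This is a minimal sketch under the article's illustrative assumptions ($75 bay rate, $850 screen, 10,000 balls a year); the names `BallProgram` and `annual_exposure` are hypothetical, and every screen-change frequency is an operator assumption rather than measured data.

```python
from dataclasses import dataclass


@dataclass
class BallProgram:
    name: str
    ball_cost: float              # dollars per ball
    screen_changes_per_year: int  # operator assumption, not measured data


def annual_exposure(program, balls_per_year, screen_cost, bay_rate, hours_lost_per_event):
    """Return annual ball spend, screen-and-downtime exposure, and their total."""
    ball_spend = program.ball_cost * balls_per_year
    per_event = screen_cost + bay_rate * hours_lost_per_event
    screen_and_downtime = program.screen_changes_per_year * per_event
    return {
        "ball_spend": ball_spend,
        "screen_and_downtime": screen_and_downtime,
        "total": ball_spend + screen_and_downtime,
    }


# Illustrative inputs taken from the assumptions listed in the text.
cheap = BallProgram("cheap", 0.50, 3)
controlled = BallProgram("controlled", 0.70, 1)

for hours in (8, 20):
    c = annual_exposure(cheap, 10_000, 850, 75, hours)
    k = annual_exposure(controlled, 10_000, 850, 75, hours)
    gap = c["screen_and_downtime"] - k["screen_and_downtime"]
    net = c["total"] - k["total"]
    print(f"{hours} lost hours per event: screen-and-downtime gap ${gap:,}, "
          f"net gap after ball spend ${net:,.0f}")
```

Replace the inputs with your own bay rate, screen cost, ball volume, and recorded replacement history. The structure matters more than the sample numbers: ball spend and screen-and-downtime exposure sit in the same total, so a per-ball saving cannot be judged on its own.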

Bay Downtime Control Starts Before the Screen Tears

- Separate indoor and outdoor ball inventory
- Define who can approve bay-use ball replacements
- Track replacement timing and restart timing as revenue fields, not maintenance notes

Write one control line into the operating plan: replacement timing and bay restart timing must be treated as revenue-protection fields, not informal maintenance estimates. That is how you stop downtime from hiding inside “we’ll take care of it next week.”

✔ True — Downtime is usually the bigger financial story

Once a premium bay sells by the hour, booked-hour loss can outrun material savings fast. That is why per-ball price without downtime math is an incomplete purchasing view.

✘ False — “A torn screen only costs the replacement screen”

The room also loses revenue while it is offline, and the guest experience keeps degrading long before a full replacement finally gets approved.

What Proof Should You Demand Before Switching Balls?

If you are protecting an asset, you need proof that survives contact, not a quote that survives email.

Do not switch indoor balls on price or slogans alone. The supplier should prove seam condition, surface durability, batch consistency, and the exact production version being shipped into your bays.

This is where smart buyers separate product language from operating control. A sample kit is not sales theater. It is the buyer’s control system for asset protection.

The first rule is simple: approve one controlled indoor-ball program, not a handful of claims. That means the sample, the surface proof, the seam proof, the QC report, and the incoming lot all need to point to the same production candidate. If they do not, you are not evaluating a program. You are evaluating fragments.

A clean sample does not protect your bays. A controlled production lot does.

If seam proof, repeated-impact proof, and the first shipment lot do not point to the same production version, the buyer is still approving risk, not reducing it.

That is why supplier professionalism matters beyond price. Mature suppliers answer with logic. They explain what was tested, which sample was tested, what the comparison object was, how the production batch was checked, which devices were used, what the calibration reference was, how revision control works, and how the approved proof version follows into production records. Weak suppliers answer with mood. The mockup looks nice. The quote comes fast. The quality language stays vague.

A failure signal here is “high quality” with no seam macro. Another is one clean sample with no deployment rule preventing dirty outdoor inventory from entering the room later. A third is one hero ball with no batch spread at all.

Use this as the minimum proof stack:

| Proof item | Why it matters | Minimum buyer ask | Next step |
|---|---|---|---|
| Seam macro | Reveals flashing risk before impact | Close-up equator images with scale | Reject vague “smooth finish” language |
| Repeated-impact surface proof | Shows whether finish stays smooth | Before/after photo set or method note | Compare cheap and indoor candidate |
| 12-ball QC report | Proves consistency, not one hero sample | Raw values + sigma + range + devices | Attach to approval file |
| Production version control | Stops sample / mass drift | Locked proof version on QC and packing docs | Hold shipment if traceability breaks |
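For the 12-ball QC report, a buyer can recompute the summary figures from the supplier's raw values instead of trusting the printed ones. The sketch below assumes "sigma" means the sample standard deviation, which is an assumption the supplier should confirm. The function name `qc_summary` is hypothetical, and the readings are placeholders invented purely to show the calculation, not measurements from any real ball.

```python
import statistics


def qc_summary(values):
    """Summarize one measured attribute across a QC sample."""
    return {
        "n": len(values),
        "mean": statistics.mean(values),
        "sigma": statistics.stdev(values),  # sample standard deviation (assumed convention)
        "range": max(values) - min(values),
    }


# PLACEHOLDER compression readings for a 12-ball sample (not real data).
compression = [80, 82, 81, 79, 80, 83, 81, 80, 82, 79, 81, 80]

summary = qc_summary(compression)
print(f"n={summary['n']} mean={summary['mean']:.2f} "
      f"sigma={summary['sigma']:.2f} range={summary['range']}")
```

Run the same function on weight and diameter values from the report. If the recomputed figures do not match the supplier's summary, that mismatch is itself a finding for the approval file.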

The receiving team needs its own rule set too. Indoor deployment should reject outdoor-used, visibly scuffed, dirty, or rough-surface balls before they ever reach the bucket in a simulator room. If that sounds obvious, good. It should still be written down.
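One way to keep that receiving rule from drifting back into a verbal habit is to express it as an explicit checklist. The sketch below is a hypothetical illustration: the `BallCheck` fields simply mirror the four rejection conditions named above, and how each flag gets set (visual inspection, inventory tags, and so on) is left to the facility.

```python
from dataclasses import dataclass


@dataclass
class BallCheck:
    outdoor_used: bool
    visibly_scuffed: bool
    dirty: bool
    rough_surface: bool


def reject_reasons(ball):
    """Return the list of receiving-rule violations for one ball or batch."""
    reasons = []
    if ball.outdoor_used:
        reasons.append("outdoor-used")
    if ball.visibly_scuffed:
        reasons.append("visibly scuffed")
    if ball.dirty:
        reasons.append("dirty")
    if ball.rough_surface:
        reasons.append("rough surface")
    return reasons


def approve_for_bay(ball):
    """A ball is approved for bay use only if no rejection condition applies."""
    return not reject_reasons(ball)


clean = BallCheck(False, False, False, False)
range_ball = BallCheck(outdoor_used=True, visibly_scuffed=True, dirty=False, rough_surface=False)
print(approve_for_bay(clean), reject_reasons(range_ball))
```

Recording the rejection reasons, not just a pass or fail, gives the operator a trail showing which condition is sending the most inventory back.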

Put one acceptance clause into the PO: Final approved indoor-use golf ball shall match the approved seam condition, surface finish, and compression specification of the pre-production sample, with buyer acceptance based on macro visual inspection, surface-condition method results, and batch QC values for weight, diameter, compression, and visual-defect rate.

Then lock the version path just as tightly: Supplier shall issue one locked indoor-ball proof version before production and reference that version on QC report, batch record, and packing list; any change to molding precision, coating system, compression target, packaging spec, or deployment recommendation requires written buyer approval before shipment.

And use the RFQ to force comparability instead of conversation: Quote one fixed indoor-ball platform across two surface-finish levels and include seam macro photos, surface-condition method after repeated impact, production-batch QC pack for the tested indoor-use SKU, and an indoor-bay TCO planning model based on screen wear, replacement assumptions, and downtime exposure.

The best suppliers do not just answer quickly. They answer logically. They can explain the failure mode, the verification path, the production controls, and the receiving rule without hiding behind “premium quality” fog. In this category, that matters more than a polished website or a low unit price.

FAQ

Do real golf balls ruin simulator screens?

Not automatically. The bigger risk is using dirty, scuffed, chipped, or visibly rough balls indoors instead of a controlled indoor-use ball.

A clean, smooth ball and a damaged ball are not the same thing from a screen-wear perspective. Facilities should separate “real ball” from “bad surface condition.” The correct control is to inspect surface condition, keep indoor and outdoor balls separate, and reject anything visibly worn before it enters a simulator bay.

Can you use outdoor range balls in a simulator?

They may still measure, but that does not make them appropriate for a premium indoor bay.

Outdoor-used balls often carry dirt, hidden scuffs, finish wear, and rougher surface history that create avoidable screen-abrasion risk. Never treat outdoor-used inventory as the indoor default. Create a written receiving and segregation rule so indoor balls stay indoor-only, and reject visibly worn stock before deployment.

How long should an impact screen last?

There is no single universal answer because screen life depends on material, usage intensity, installation, ball condition, and maintenance.

The safer buying language is scenario modeling, not one hard number repeated as industry truth. Use your own records where possible, separate vendor marketing from facility experience, and plug screen-life assumptions into a downtime-sensitive TCO model instead of pretending one lifespan figure fits every bay.

What is the best golf ball to use in a simulator?

The best simulator ball is the one that stays smooth, has controlled seam quality, fits the launch-monitor use case, and does not accelerate screen wear.

“Best” is not a prestige slogan. It is an indoor-use specification. Verify seam condition, verify surface stability after repeated impacts, and verify the compression fit for bay use. A ball that looks fine in the box but roughens quickly in contact is not the best indoor ball.

Why do indoor golf balls scuff so easily?

Often because the finish system is not stable enough for repeated high-speed indoor impacts, or because the balls were already compromised by outdoor use.

The right comparison is not old ball versus new ball. It is stable finish versus unstable finish on the final production candidate. Inspect before and after repeated impacts, ask what the coating system is, and compare different finish-control approaches instead of assuming every glossy ball has the same surface durability.

What should be in a screen-friendly sample kit?

One platform, multiple proofs. The buyer should be able to inspect seam quality, surface condition, and batch logic on the same indoor-ball candidate.

A useful kit includes seam macro, repeated-impact surface proof, QC pack, and a receiving checklist tied to the same indoor-use version being quoted. If those items come from unrelated samples, the kit is incomplete and the decision is still being made on trust rather than proof.

Conclusion

The ball is not a consumable decision anymore. It is part of a bay-protection system.

Per-ball savings can be false savings if they accelerate screen wear, shorten replacement intervals, and push booked hours off the calendar. In a commercial indoor room, that is not cost control. It is delayed expense.

The procurement standard is straightforward: define the indoor-ball spec, verify seam and surface proof, tie approval to batch evidence, and deploy only what the proof stack actually supports.
